Gemini 3 Testing: Everything We Know About Google's Next AI Model Checkpoints

What's Really Happening with Gemini 3 Right Now?

Google is actively testing its Gemini 3 AI model through a series of checkpoints ahead of an expected Q4 2025 release. CEO Sundar Pichai has confirmed the model will launch later this year, in line with Google's annual release pattern. The testing phase includes A/B tests in AI Studio, where checkpoints such as 2HTT, ECPT, and X28 have appeared. The strongest of these reportedly outperform Gemini 2.5, particularly in coding tasks and SVG generation.

Early testing reveals enhanced spatial reasoning, consistent output quality, and strong multimodal capabilities, including real-time video processing and 3D object understanding. The model represents Google's next-generation AI architecture, building on the Mixture-of-Experts foundation, with expected features like expanded multimodal integration, enhanced context handling beyond 1M tokens, built-in advanced reasoning with integrated 'Deep Think' capabilities, and improved inference efficiency using TPU v5p hardware.

Testing Checkpoints and Performance Analysis

The Gemini 3 testing phase has revealed multiple checkpoints being evaluated, each showing different performance characteristics. The 2HTT checkpoint demonstrated exceptional spatial reasoning with coherent floor plan layouts and proper furniture placement. SVG generation showed improved composition, while 3D scene creation in Minecraft-like environments produced the best one-shot results seen yet. Chess demonstrations worked effectively, and the model crushed AIME-style mathematical questions, feeling like a thinking variant with slower first token output indicating deeper deliberation.

The ECPT checkpoint appeared to be a nerfed version, possibly a lower reasoning variant or quantized setup for broader rollout. While it maintained mathematical capabilities and better-than-Sonnet performance overall, it didn't feel like a generational upgrade. The recent X28 checkpoint emerged as a significant improvement, showing real consistency with proper door placements, sensible layouts, and draggable furniture in floor plans. SVG pandas looked like they were actually eating burgers rather than just posing, and 3D scenes gained colorful backgrounds with better polish.

Why do some testing checkpoints perform better than others? The performance differences appear to come down to reasoning depth and model configuration. The strongest checkpoints, like 2HTT and X28, show "thinking variant" behavior with deliberate processing, while nerfed versions like ECPT prioritize speed over depth for broader accessibility.

Release Timeline and Availability

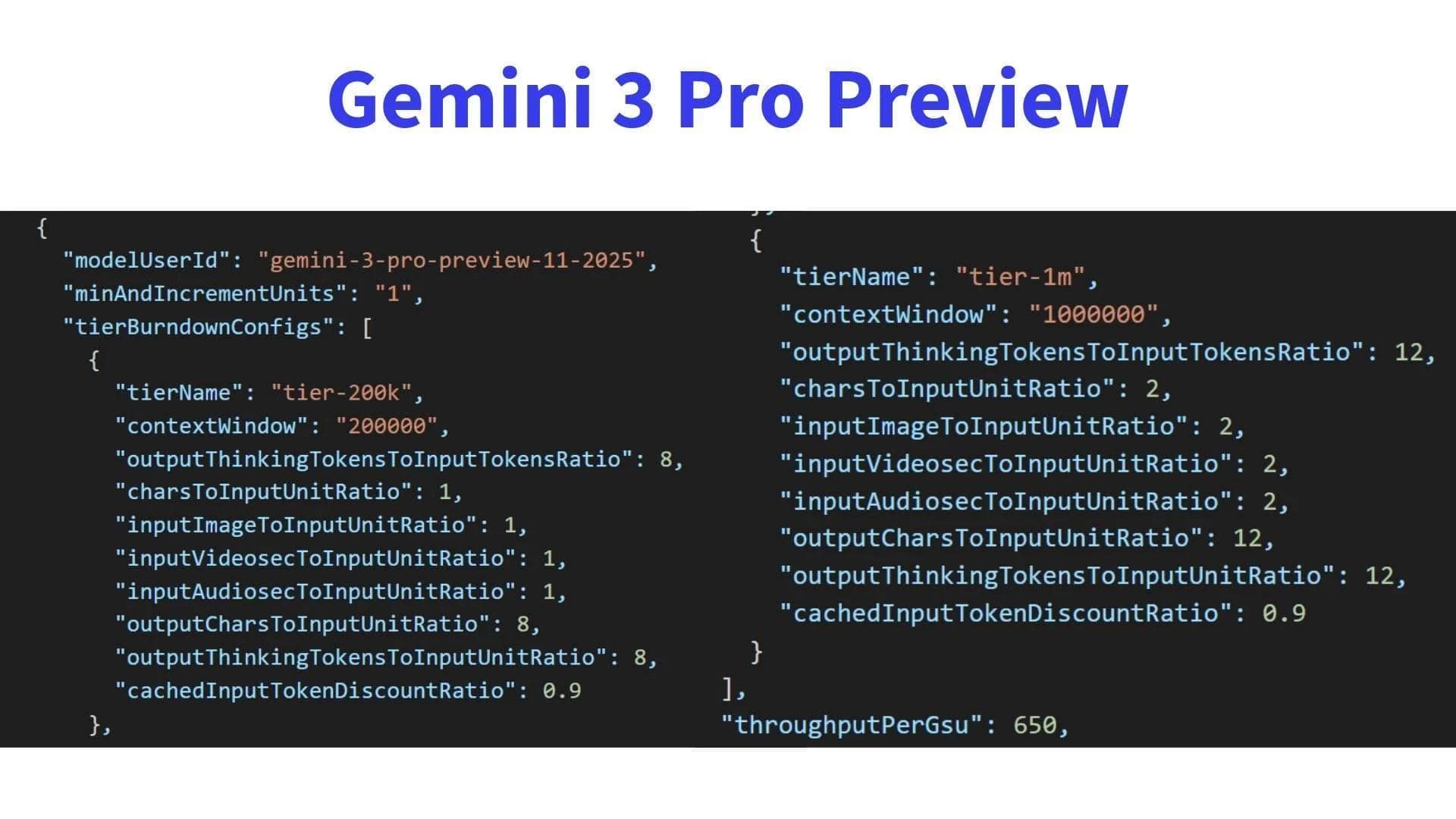

During the Q3 earnings call, Google CEO Sundar Pichai confirmed that Gemini 3 will be released in 2025, following the company's established annual release pattern. The expected timeline points to a Q4 2025 launch, potentially around December 2025, with limited preview access starting in November 2025 for enterprise and Vertex AI partners. Historically, Google has tended to release major AI models in December, building anticipation during slower news cycles.

The rollout will likely follow Google's standard pattern: preview access for select enterprise users, general developer access through Gemini 3 Pro/Ultra tiers on Google Cloud, then consumer-facing deployment embedded in Pixel devices, Android integration, and Workspace applications. Some reports suggest that model listings have already appeared briefly on Vertex AI, indicating preparation for the release phase.

When exactly can I start using Gemini 3? Based on current testing patterns, you can expect preview access for enterprise users in November 2025, with general availability following later in Q4 2025. Individual users will likely get access through Google Cloud and consumer products in early 2026.

Technical Features and Capabilities

Gemini 3 represents a significant architectural advancement over Gemini 2.5, building on the Mixture-of-Experts foundation with enhanced planning and tool orchestration capabilities. The model is expected to feature expanded multimodal integration supporting real-time video (up to 60 FPS), 3D object understanding, and geospatial data analysis. This would enable applications from live video summarization to augmented reality navigation and robotics vision.

Enhanced context handling is projected to extend well beyond Gemini 2.5's 1-million-token limit, potentially reaching "multi-million" token capacity with smarter retrieval mechanisms. Built-in advanced reasoning is expected to integrate the 'Deep Think' capabilities permanently, eliminating the need for manual toggles. The model is also expected to run on Google's TPU v5p hardware for near real-time performance, with improved inference efficiency and multi-agent tool orchestration for sophisticated workflows.

What makes Gemini 3 different from previous versions? Gemini 3 focuses on consistency and spatial reasoning. While previous models often varied in output quality between runs, the strongest Gemini 3 checkpoints produce similar, coherent results from one run to the next. The model also appears to perform real mathematical calculations in 3D scenes rather than only handling simple demo tasks.

Performance Comparison and Market Position

Testing results show Gemini 3 checkpoints achieving approximately 25% improvement over Claude Sonnet 4.5 on various benchmarks, with particular strength in spatial reasoning and one-shot 3D scenes. The model competes effectively with GPT-5 on mathematical tasks and consistency while maintaining superior UI taste and design sensibilities. The consistency factor is particularly notable, as Gemini 3 produces similar coherent layouts across multiple runs, unlike the variability seen in competing models.
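
If you want to check the consistency claim yourself, one crude approach is to run the same prompt several times and score how similar the outputs are. The sketch below uses Python's standard `difflib` for that. The `consistency_score` helper and the sample strings are hypothetical placeholders, not real model output, and plain text similarity is only a rough proxy for whether two generated layouts are actually alike.

```python
from difflib import SequenceMatcher
from itertools import combinations


def consistency_score(outputs: list[str]) -> float:
    """Mean pairwise similarity (0.0 to 1.0) across outputs of repeated runs.

    Hypothetical helper for illustration: 1.0 means every run produced
    identical text, values near 0.0 mean the runs share almost nothing.
    """
    pairs = list(combinations(outputs, 2))
    if not pairs:
        # Zero or one output: nothing to compare, treat as fully consistent.
        return 1.0
    total = sum(SequenceMatcher(None, a, b).ratio() for a, b in pairs)
    return total / len(pairs)


if __name__ == "__main__":
    # Placeholder strings standing in for SVG or HTML saved from repeated runs.
    runs = [
        "<svg><rect id='door' x='10'/><rect id='sofa' x='40'/></svg>",
        "<svg><rect id='door' x='12'/><rect id='sofa' x='40'/></svg>",
        "<svg><circle id='lamp' r='5'/></svg>",
    ]
    print(f"consistency: {consistency_score(runs):.2f}")
```

A higher score across, say, five runs of the same floor plan prompt would support the "similar layouts across runs" observation, while a low score would suggest the variability described for competing models.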

On practical coding tasks, the strongest Gemini 3 checkpoints demonstrate generation quality at or above Claude Opus levels, clearly ahead of Sonnet 4.5 in spatial reasoning. The model's ability to handle 3D environments with real mathematical calculations sets it apart from competitors that may excel at simple HTML/CSS demos but struggle with complex spatial logic and multi-step reasoning.

How does Gemini 3 compare to ChatGPT and Claude? Based on current testing, Gemini 3's strongest checkpoints show approximately 25% improvement over Claude Sonnet 4.5 and compete directly with GPT-5, the model behind ChatGPT, on mathematical tasks and consistency. The main advantage is superior spatial reasoning and more consistent output quality across multiple runs.

Developer Access and Testing Process

Developers can access Gemini 3 testing through AI Studio by selecting Gemini 2.5 Pro and sending prompts, with checkpoints occasionally appearing as A/B test variants. The checkpoints show up roughly once every 40-50 prompts, indicated by checkpoint IDs starting with 2HTT. Early access programs for enterprise and Vertex AI partners are expected to begin in November 2025.
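
To put the "once every 40-50 prompts" figure in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes each prompt has an independent, fixed chance of landing on a checkpoint, which is a simplification on my part and not something Google has documented. The function names are made up for illustration.

```python
import math


def chance_of_variant(prompts: int, rate: float) -> float:
    """Probability of seeing at least one A/B variant in `prompts` tries,
    assuming each prompt independently hits a variant with probability `rate`."""
    return 1.0 - (1.0 - rate) ** prompts


def prompts_needed(target: float, rate: float) -> int:
    """Smallest number of prompts that gives at least `target` probability
    of seeing a variant, under the same independence assumption."""
    return math.ceil(math.log(1.0 - target) / math.log(1.0 - rate))


if __name__ == "__main__":
    for one_in in (40, 50):
        rate = 1.0 / one_in  # assumed per-prompt chance, from the 40-50 estimate
        print(
            f"1 in {one_in}: "
            f"P(at least one hit in {one_in} prompts) = "
            f"{chance_of_variant(one_in, rate):.2f}, "
            f"prompts for 90% = {prompts_needed(0.9, rate)}"
        )
```

Under that assumption, sending 40 to 50 prompts gives you a bit under a two-in-three chance of seeing a checkpoint at least once, and you would need roughly 90 to 115 prompts to reach a 90% chance. In other words, not hitting a checkpoint in your first 50 tries is entirely normal.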

Google's model lifecycle documentation indicates that major versions undergo a staged release: initial alpha, beta, release candidate, and finally stable. The company is likely using these testing phases to gather feedback on real-world performance, identify edge cases, and refine API endpoints. Developers interested in early access should monitor Google AI Studio and Vertex AI Model Garden for updates and availability announcements.

Can I test Gemini 3 right now? You can test early checkpoints through AI Studio by selecting Gemini 2.5 Pro and sending prompts. The checkpoints appear randomly about once every 40-50 prompts and will show checkpoint IDs starting with 2HTT. For more reliable access, enterprise users can expect early access programs starting in November 2025.

This comprehensive testing phase shows that Gemini 3 could be a significant leap forward for AI development, particularly for applications requiring spatial reasoning, consistent outputs, and integrated multimodal capabilities. The model's performance across various checkpoints suggests Google is making meaningful progress toward more reliable and capable AI systems that developers can actually build real applications with.

According to Google's official AI Studio testing platform, developers can access beta checkpoints and track performance improvements. For enterprise integration details, see the Vertex AI documentation and TPU v5p specifications for technical implementation.
